NeRFFaceEditing: Disentangled Face Editing in Neural Radiance Fields

Kaiwen Jiang1,2 Shu-Yu Chen1 Feng-Lin Liu1 Hongbo Fu3 Lin Gao1 *

1 Institute of Computing Technology, Chinese Academy of Sciences

2 Beijing Jiaotong University

3 City University of Hong Kong

* Corresponding author

Proc. of SIGGRAPH Asia 2022

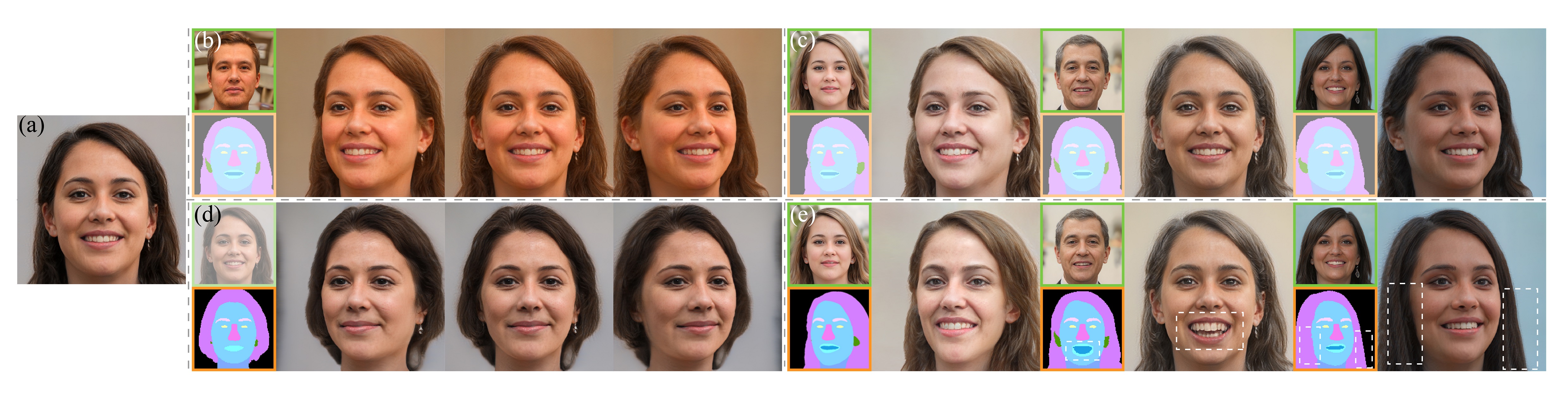

Figure: Our NeRFFaceEditing method allows users to intuitively edit a facial volume to manipulate its geometry and appearance guided by rendered semantic masks. Given an input sample (a), our method disentangles its geometry and appearance, and allows for one- or multi-label editing. We show a range of flexible face editing tasks that can be achieved with our unified framework: (b) changing the appearance according to a given reference sample while retaining the geometry and 3D consistency; (c) changing the appearance for different views with different reference samples while retaining the geometry; (d) editing multiple labels of the semantic mask for a certain view while keeping the appearance and 3D consistency; (e) editing both the geometry and appearance. The inputs used to control the appearance and geometry are highlighted in green and orange boxes, respectively.

Abstract

Recent methods for synthesizing 3D-aware face images have achieved rapid development thanks to neural radiance fields, allowing for high quality and fast inference speed. However, existing solutions for editing facial geometry and appearance independently usually require retraining and are not optimized for the recent work of generation, thus tending to lag behind the generation process. To address these issues, we introduce NeRFFaceEditing, which enables editing and decoupling geometry and appearance in the pretrained tri-plane-based neural radiance field while retaining its high quality and fast inference speed. Our key idea for disentanglement is to use the statistics of the tri-plane to represent the high-level appearance of its corresponding facial volume. Moreover, we leverage a generated 3D-continuous semantic mask as an intermediary for geometry editing. We devise a geometry decoder (whose output is unchanged when the appearance changes) and an appearance decoder. The geometry decoder aligns the original facial volume with the semantic mask volume. We also enhance the disentanglement by explicitly regularizing rendered images with the same appearance but different geometry to be similar in terms of color distribution for each facial component separately. Our method allows users to edit via semantic masks with decoupled control of geometry and appearance. Both qualitative and quantitative evaluations show the superior geometry and appearance control abilities of our method compared to existing and alternative solutions.